The speed feels like you’ve removed friction from the entire loop: ask → change → verify → iterate. You can keep momentum in a way that’s genuinely hard to do in a big codebase with normal latency.

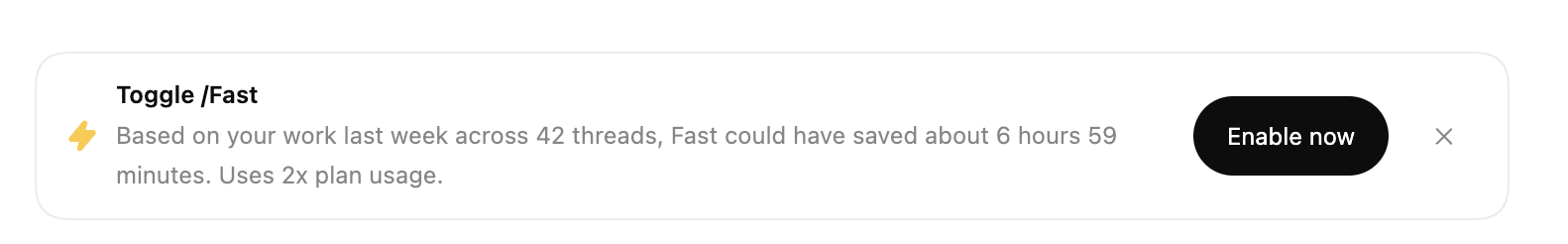

But there’s an obvious trade-off: Fast is expensive.

(And the UI is pretty explicit about it: Fast mode consumes more of your plan usage.)

So it’s pushed me into a mindset shift:

In the AI era, context is cost

I posted recently about repo hygiene and log hygiene as a “software factory” principle (the gist: keep the working set tight → keep tasks cheap → keep feedback loops fast). That idea becomes even more relevant when you turn the speed dial up.

Because the faster the agent runs, the more it will:

- traverse files you didn’t intend it to touch

- pull in irrelevant context

- burn tokens/plan usage on noise

- accidentally turn “small changes” into bigger ones than you intended

Fast mode doesn’t change the fundamentals — it amplifies them.

The unsexy optimisation: make the monolith lighter

One of the best ways to offset the cost of Fast is boring, but effective:

Audit the monolith for dead repo assets:

- old CSVs / exports

- screenshots

- temp files

- sample data

- abandoned scripts

- anything that’s not needed by the app, tests, or deploy process

This is less about disk space and more about attention and retrieval. A noisy repo is harder for humans to navigate, and it’s harder for an agent to stay focused. You pay for that noise every time the model “looks around”.
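A first-pass audit can be scripted. Here is a minimal sketch in Python, assuming that file suffix is a good enough signal for "probably dead weight". The suffix list, the skipped directories, and the name `find_audit_candidates` are all hypothetical choices for illustration, not part of any real tool. It only lists candidates, largest first, and never deletes anything, so a human still decides what goes.

```python
import os
from pathlib import Path

# Assumption: these suffixes are typical "dead asset" candidates. Tune for your repo.
CANDIDATE_SUFFIXES = {".csv", ".png", ".jpg", ".jpeg", ".tmp", ".bak", ".log"}
# Assumption: directories that should never be scanned.
SKIP_DIRS = {".git", "node_modules", ".venv"}


def find_audit_candidates(root, suffixes=CANDIDATE_SUFFIXES, skip_dirs=SKIP_DIRS):
    """Return (relative_path, size_in_bytes) pairs for candidate files, largest first."""
    root = Path(root)
    found = []
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune skipped directories in place so os.walk never descends into them.
        dirnames[:] = [d for d in dirnames if d not in skip_dirs]
        for name in filenames:
            path = Path(dirpath) / name
            # Compare suffixes case-insensitively so "shot.PNG" is caught too.
            if path.suffix.lower() in suffixes:
                rel = path.relative_to(root).as_posix()
                found.append((rel, path.stat().st_size))
    # Largest files first: they are usually the most worthwhile to review.
    return sorted(found, key=lambda item: item[1], reverse=True)
```

Calling `find_audit_candidates(".")` from the repo root gives a review list. Anything still needed by the app, tests, or deploy process stays; the rest is a candidate for removal or for an ignore file.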

So the play is:

Keep the repo lean, so Fast mode stays economically viable.

Another big lever: tell the model where to look (and where not to)

This is something I already do, but I’m being even more explicit now:

In the prompt/spec, I’ll state:

- the exact files/classes to inspect

- the boundaries (what to treat as out-of-scope)

- where not to look (folders, legacy areas, unrelated surfaces)

- what “done” looks like (tests + checks to run)

It sounds trivial, but it changes behaviour massively. It keeps the agent inside the smallest possible “working set”, which reduces both:

- execution mistakes

- context/token burn

It’s the same principle as good debugging: start with a hypothesis, constrain the search space, validate quickly.
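To make the scoping checklist above repeatable, it can be captured as a small template. This is a sketch under my own assumptions: the `TaskScope` fields, the section titles, and `render_prompt` are hypothetical names, and the output is plain text to paste into whatever agent you use, not any tool's official format.

```python
from dataclasses import dataclass, field


@dataclass
class TaskScope:
    """Hypothetical container for the four things stated in the prompt/spec."""
    task: str
    inspect: list[str] = field(default_factory=list)       # exact files/classes
    out_of_scope: list[str] = field(default_factory=list)  # boundaries
    do_not_read: list[str] = field(default_factory=list)   # where not to look
    done_when: list[str] = field(default_factory=list)     # tests + checks to run


def _section(title, items):
    """Render one titled bullet list, or nothing if the list is empty."""
    if not items:
        return []
    return [f"{title}:"] + [f"- {item}" for item in items]


def render_prompt(scope):
    """Turn a TaskScope into a plain-text prompt preamble."""
    lines = [f"Task: {scope.task}"]
    lines += _section("Inspect only", scope.inspect)
    lines += _section("Out of scope", scope.out_of_scope)
    lines += _section("Do not read", scope.do_not_read)
    lines += _section("Done when", scope.done_when)
    return "\n".join(lines)


# Example with made-up paths and commands:
scope = TaskScope(
    task="Fix rounding in invoice totals",
    inspect=["billing/invoice.py"],
    do_not_read=["legacy/"],
    done_when=["pytest tests/billing"],
)
print(render_prompt(scope))
```

The point of the structure is that an empty field is visible at a glance: if `do_not_read` or `done_when` is blank, the agent is being left to guess.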

The meta lesson

Fast mode is incredible — but it makes the boring stuff more valuable:

- clean repo

- clean logs

- clear boundaries

- precise prompts

- strong tests

If you want the speed without the chaos (or runaway cost), you have to run the factory properly.