One of the most underutilised design patterns in startups and scale-ups is the adapter pattern.

I used it today and it reminded me why it’s so valuable: it’s not just “clean code”. It’s commercial leverage.

I used it today and it reminded me why it’s so valuable: it’s not just “clean code”. It’s commercial leverage.

How lock-in happens (and why it hurts later)

Early on, startups get generous free tiers and credits:

AWS/GCP, map providers, email providers, analytics tools, Intercom, HubSpot, etc.

It’s rational to move fast and take the free value.

But as you scale you hit enterprise pricing, quotas, overage charges, and a commercial reality:

you’ve built your product around one provider’s shape.

You’re locked in, and switching becomes painful at exactly the moment it matters most.

Early on, startups get generous free tiers and credits:

AWS/GCP, map providers, email providers, analytics tools, Intercom, HubSpot, etc.

It’s rational to move fast and take the free value.

But as you scale you hit enterprise pricing, quotas, overage charges, and a commercial reality:

you’ve built your product around one provider’s shape.

You’re locked in, and switching becomes painful at exactly the moment it matters most.

The adapter pattern is a way to design for optionality.

The adapter pattern isn’t “premature abstraction”

Done badly, it becomes over-engineering.

The adapter pattern isn’t “premature abstraction”

Done badly, it becomes over-engineering.

Done well, it’s a hedge against lock-in:

- you keep one internal interface

- you implement providers behind it

- you can swap/rotate/fallback without rewriting the whole system

This is especially powerful for external APIs where:

- pricing changes as you scale

- free tiers exist

- reliability varies

- you want redundancy / fallback

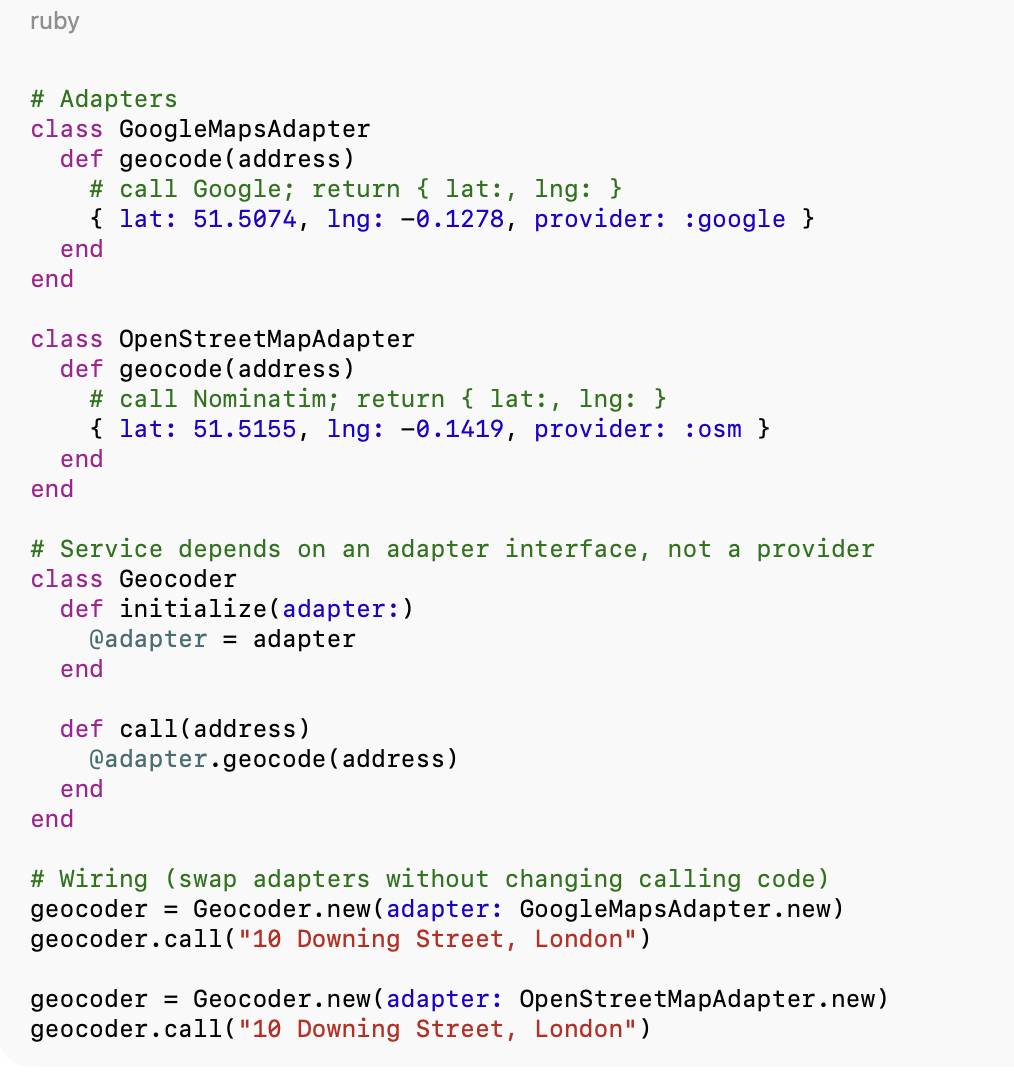

A simple, concrete example: Google Maps + OpenStreetMap

If you have a map-heavy product, you might start with Google Maps because it’s the easiest and most complete.

But as you scale, you might choose rules like:

- use Google for high-confidence geocoding/routing (best coverage, fewer weird edge cases)

- use a cheaper provider for simpler cases to control cost

- rotate usage once you hit a daily threshold (e.g. first 1,000 requests go to Google, the rest go to OpenStreetMap services)

- fail over automatically if a provider is down or rate-limited

This is where an adapter pays off: one internal interface, multiple providers behind it.

For example: Google as the primary, with OpenStreetMap’s Nominatim for geocoding (address → lat/lng) and OSRM for routing/distance (A → B) as the fallback/overflow path.

Adapters make this feasible without spreading “provider thinking” through your whole codebase.

Boilerplate example of what this looks like in Ruby:

Boilerplate example of what this looks like in Ruby:

A surprising AI lesson: it won’t apply patterns unless you tell it to

I used an LLM while working on this, and it didn’t spot the adapter opportunity at first — even though my agent instructions explicitly call out the adapter pattern (along with other OO/design practices).

Why? Because the right answer is… it depends.

There are plenty of times you should not add an adapter:

- if you’ll never swap providers

- if the API isn’t a meaningful cost/risk

- if the domain is stable

But in this case, cost scaling and potential provider rotation made it worth it.

Once I explicitly reminded the model to consider an adapter, it reworked the solution cleanly.

And because I had a test suite around the existing behaviour, the refactor was safe:

- I could prove the old adapter still worked

- I could prove the new adapter worked

- I could add routing logic without fear

That’s the real takeaway for me:

AI makes refactors cheaper, but tests make them safe.

AI makes refactors cheaper, but tests make them safe.

The pattern I’m leaning into

If you’re building a startup product:

- treat key external services as “replaceable”

- design an internal interface

- implement adapters per provider

- add a small routing/fallback layer

- make tests the contract so swapping providers is low risk

It won’t matter for every integration.

But for anything that will become a material cost line or strategic dependency as you scale, it’s one of the cleanest ways to buy optionality early.