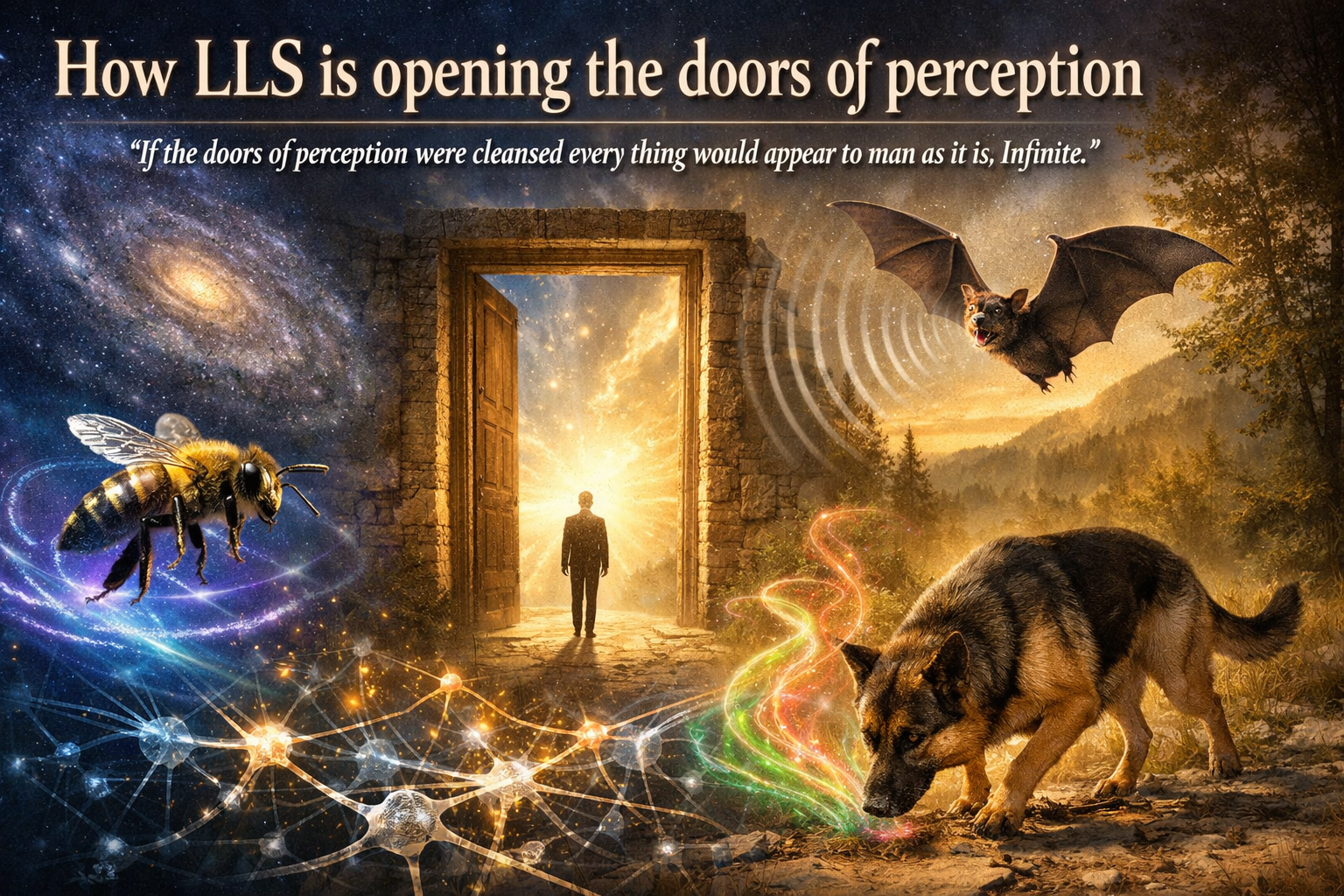

"If the doors of perception were cleansed every thing would appear to man as it is, Infinite."

The Englishman William Blake wrote this in 1790, in plate 14 of The Marriage of Heaven and Hell. Blake had a reputation as a visionary and mystic, someone who saw angels and believed in the supernatural. But this line had nothing to do with visions. It was an observation about how knowledge actually works.

Take a bee, a bat, and a dog. They live in the same world we do, but the bee sees colours we cannot see, the bat hears what is silence to us, and the dog smells a reality that is entirely invisible to us. Biologist Jakob von Uexküll called this the Umwelt: every species lives in its own perceptual world, bounded by what its senses let through. We see what our senses and brain allow, and that is only a fraction of what is actually there.

What most people never consider is that knowledge is not stored inside individual neurons, our brain cells. What we call knowledge emerges from the trillions of connections between those cells, the synapses. It sits not in the neurons but between them.

The world is infinite, but we look through narrow chinks shaped largely by the biases that Nobel laureate Daniel Kahneman documented so thoroughly.

I bought Blake's book because of the biography of Jim Morrison by Jerry Hopkins and Danny Sugerman, No One Here Gets Out Alive. Most people know Morrison as a singer, a poet, someone who died too young. What almost nobody knows is that, according to Sugerman, he had read and knew by heart the five hundred most important philosophical works of the past thousand years. Whether it was exactly five hundred doesn't matter. The point is that Morrison knew the philosophical weight of that line and didn't take his band's name from Blake just because it sounded cool.

The philosophical undercurrent

Philosopher Edmund Husserl formulated, more than a century later and independently of Blake, the same movement as method: the epoché, the deliberate suspension of your automatic interpretive layer so you can see what is actually there before interpretation rolls over it. Back to the things themselves. Aldous Huxley took mescaline, a mind-altering substance derived from the peyote cactus, and wrote a book about it, The Doors of Perception. Morrison knew the philosophical weight of that line and named his band The Doors.

Blake saw the problem but had no method for doing anything about it. Husserl had the method but it stayed locked inside the philosophical atelier. Huxley found, via mescaline, an experience that seemed to break through the limits of ordinary perception, but an experience you cannot transfer is not an instrument. Morrison understood the weight of the line and gave it a name.

And there it stayed, until a few months ago.

The countervoice

Claude Shannon, the man after whom Anthropic reportedly named its AI assistant, did something different. In 1948 Shannon published the paper that founded information theory, A Mathematical Theory of Communication, and opened it with a deliberate choice: "The fundamental problem of communication is that of reproducing at one point either exactly or approximately a message selected at another point. Frequently the messages have meaning." Right after that remark, "frequently the messages have meaning", he cuts meaning away by declaring the semantic side of communication irrelevant to the engineering problem. Not by accident, but as a principled decision. Shannon solved the technical problem: do the symbols arrive intact? What those symbols mean, and what they cause, he deliberately left to others.

That was brilliant and it made the entire digital age possible. But it created a blind spot that still shapes our systems more than seventy-five years later. We came to think it doesn't matter which lens you look through, as long as the bits are correct.

Shannon didn't narrow the lens Blake described. He left the meaning question deliberately empty. A gap he never intended to fill, but that still needed filling.

A language model does exactly what Shannon described: moving bits with great precision, without any idea what they mean. The system I built turns the usual proportions around. Measured by volume, 98 percent is data and 2 percent is AI: half a million records against a thin layer that only asks questions and cross-references, and never interprets. The meaning is not in the model but in the structure. The irony is that I used a language model, precisely the type of system Shannon described, to work in the space he deliberately left empty.

What was always missing

Blake had a name for the habits that keep the lens narrow: mind-forg'd manacles. The brain always takes the familiar road.

Psychologist David Kolb described in 1984 how people learn through experience: something happens, you reflect, you extract something from it, you apply it. An elegant cycle, but one that begins at processing, not at capturing. What happens before you start reflecting stays out of frame.

Husserl asked exactly that question. What is lost in the conversion of experience into knowledge? Experience translated directly into knowledge loses its texture, its ambiguity, the edges that are not yet finished. His epoché was meant to prevent that. Kolb and Husserl are not opposites but complements: Kolb describes how you learn from what you have captured, Husserl describes how you capture before it evaporates.

You have probably decided at some point to look differently. A week later you were looking the same way as before. Not because you didn't mean it, but because a resolution is not an architecture.

The reversal

Blake complained about mind-forg'd manacles, but chains can be reforged into scaffolding. Not by trying harder, but by building the discipline outside yourself. A system that asks you questions you no longer have to remember to ask. That is the movement the Life Lens System (LLS) makes.

When I pay for something in a bookshop, the system asks whether the book is for me or for someone else, who the author is, what the cover looks like, and whether it should go on my reading list. When I spend more than forty euros at a restaurant, it wants to know whether it was lunch or dinner and who I was with, and from the time it already knows what it probably was. It catches the moment before my memory irons it flat into "I was somewhere."
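To make the idea concrete, here is a minimal sketch of what such capture rules could look like. It is an illustration under stated assumptions, not the actual LLS implementation: the `Transaction` fields, the category labels, the function names and the 16:00 cut-off between lunch and dinner are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Transaction:
    # All field names and category labels are hypothetical.
    merchant_category: str  # e.g. "bookshop" or "restaurant"
    amount_eur: float
    paid_at: datetime


def guess_meal(paid_at: datetime) -> str:
    """Guess the meal from the payment time (assumed cut-off: 16:00)."""
    return "lunch" if paid_at.hour < 16 else "dinner"


def capture_questions(tx: Transaction) -> list[str]:
    """Return the questions to ask right after a payment, if any."""
    if tx.merchant_category == "bookshop":
        return [
            "Is this book for you or for someone else?",
            "Who is the author?",
            "What does the cover look like?",
            "Should it go on your reading list?",
        ]
    if tx.merchant_category == "restaurant" and tx.amount_eur > 40:
        meal = guess_meal(tx.paid_at)
        return [
            f"Was this lunch or dinner? (probably {meal})",
            "Who were you with?",
        ]
    # No rule matched: stay silent rather than nag.
    return []


if __name__ == "__main__":
    # Invented example payment, for illustration only.
    tx = Transaction("restaurant", 62.50, datetime(2025, 3, 14, 20, 15))
    for question in capture_questions(tx):
        print(question)
```

The rules only ask. They store nothing and interpret nothing, which keeps the thin layer thin.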

Months later, when the system cross-referenced my journals, check-ins and photos from 2020, it turned out that three projects I had considered unrelated had all started on the same day on Vlieland — and I hadn't seen it in five years.
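The simplest form of that kind of cross-referencing is grouping records from different sources by day and keeping the days on which several sources meet. The sketch below assumes a hypothetical `Record` shape and uses invented sample data. It shows the principle only, not how LLS actually does it.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Record:
    # Hypothetical record shape; real sources will carry far more fields.
    source: str  # e.g. "journal", "check-in", "photo"
    day: date
    note: str


def cross_reference_by_day(records, min_sources=2):
    """Group records by day; keep days covered by at least `min_sources` sources."""
    by_day = defaultdict(list)
    for record in records:
        by_day[record.day].append(record)
    return {
        day: recs
        for day, recs in sorted(by_day.items())
        if len({r.source for r in recs}) >= min_sources
    }


if __name__ == "__main__":
    # Invented sample data, for illustration only.
    records = [
        Record("journal", date(2020, 1, 1), "first note on project A"),
        Record("check-in", date(2020, 1, 1), "arrived on the island"),
        Record("photo", date(2020, 1, 1), "sketch for project B"),
        Record("journal", date(2020, 1, 2), "unrelated entry"),
    ]
    for day, recs in cross_reference_by_day(records).items():
        print(day, [f"{r.source}: {r.note}" for r in recs])
```

Nothing here interprets the records. The function only puts side by side what already shared a date, and leaves the recognition to the person reading the result.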

A second moment, of a different order. One morning the system served me a philosophy prompt about Edmund Husserl. I recognised something but couldn't name it. When I followed that feeling through my own data and the layers I had built, I discovered that my entire information model is structurally congruent with a philosophical architecture from 1913. I had never read Husserl. I had built it independently. Vlieland showed me hidden connections within my own life. The Husserl discovery showed me a hidden connection between my work and an entire philosophical tradition.

The system holds half a million records and tens of millions of connections across twenty years of lived data. Not comparable to the brain in scale — the brain has trillions of connections — but in principle: knowledge is not in the entities but in the connections between them. That is not how I originally designed it, but what emerged on its own once the structure was good enough.

Husserl in the bedroom

Every morning I lie still for thirty seconds with a blood pressure monitor on my arm and a cat pressing her head against that same arm because she has decided that enough is enough with this lying still business. The device wants me not to move and to focus on my breathing. The cat wants precisely the opposite. What happens in those thirty seconds I cannot describe well, except that it keeps happening. Husserl thought you needed years of practice to suspend your automatic interpretive layer and see what is actually there. All I need is a blood pressure monitor and a cat that won't cooperate. The monitor does what my system does: creates the conditions in which something can become visible.

My LLS turned out to solve a philosophical problem

In December 2025 I wrote down what LLS does:

"A lens creates nothing new. The information is already there. A lens focuses, magnifies and reveals patterns. Not by adding something, but by looking at what is already there in the right way."

The connection between Blake and Husserl I didn't discover by reading. My own system served me a daily philosophy prompt and I recognised something I couldn't name. The parallel was hard to miss.

Most knowledge management systems, from Tiago Forte's PARA to Niklas Luhmann's Zettelkasten, do the first well (processing what you have already captured) and the second hardly at all (capturing the moment before it evaporates). The Life Lens System I designed does both, and does them well.

By now, people from the PKM Summit and beyond have started building their own versions and we are learning from each other. What the impact will be, we won't know for another six months. But it is fascinating and illuminating.

Anyone who wants to see the complete design of how I used the brain as a model for my information architecture, and how that led to the Life Lens System, can find the design and all the thinking behind it at Life Lens System. Start simple: look at what you already have. Your transactions, your photos, your locations — they are already there. The question is not whether you have enough data, but whether you have ever cross-referenced it.

Blake promised that a cleansed lens would show the world as it truly is. Infinite. I don't know if that's true. But I do know that I see things I hadn't seen in twenty years, and that the lens gets cleaner as the structure gets better. Perhaps that's enough.